“Why not just use ChatGPT, Claude, Copilot, etc.?” Or “Which team member is best placed to start using Clinials?”

Fair questions. My answer is usually: “Awesome that you have empowered your team members with these generic AI tools, and they are the very same people who will love, and should be using, Clinials.”

But let’s re-ask this question in light of our current under-resourced and overburdened teams.

Why not just use ChatGPT? Or, more pointedly, “Which team member should we train to do prompting well?”

The hidden cost of prompting

Most teams underestimate what it actually takes to operationalise tools like ChatGPT or Claude in a clinical trial environment:

Time spent learning how to prompt effectively

Iteration cycles to get usable outputs

Rework due to inconsistency or missing context

Manual validation against the protocol

Variability across team members, sites, and regions

You don’t just pay for the tool. You pay for:

Training

Trial and error

Quality control

Rework downstream

And critically, none of that scales cleanly. Two coordinators using the same tool will produce two different outputs.

That variability is where timelines slip.

“Cheaper” rarely is

Yes, general-purpose AI tools appear cheaper on paper. But in practice, the cost model looks like this:

Low subscription cost

High human effort

High variability

Ongoing supervision required

That’s not efficiency. That’s hidden labour.

Where Clinials shifts the equation

Clinials removes the need to “figure out prompting” entirely. Not because prompting isn’t powerful, but because teams shouldn’t have to become prompt engineers to run a trial.

Instead:

Protocols go in

Structured, workflow-aligned outputs come out

Consistently, every time

No interpretation layer. No variability between users.

The real comparison

ChatGPT, Claude, or Copilot helps individuals think faster.

Clinials helps teams execute faster. That distinction matters.

Because clinical trials don’t fail on thinking, they fail on execution:

Misinterpreted protocols

Inconsistent site processes

Rework across documents and teams

What changes with Clinials

The impact shows up quickly:

No prompt engineering or setup time

Deterministic extraction of protocol-critical content

Outputs mapped to real clinical workflows, not generic text

Built-in traceability with citations back to the protocol

Consistency across teams, studies, and regions

In trials, speed isn’t about generating words. It’s about reducing rework, interpretation risk, and downstream delays.

The strategic question isn’t “which tool?”

It’s:

Do you want your team spending time learning how to ask better questions… or moving faster with answers that are already structured, governed, and usable?

Exec Summary

ChatGPT is general-purpose.

Clinials is single-tenanted, secure, structured, repeatable, workflow-aware, and designed for regulated use, where context, consistency, and governance matter. There’s no sharing of data between accounts, and there’s no model training on your protocols.

ChatGPT is powerful. But in clinical trials, power without precision slows you down.

ChatGPT is a generalist. Clinials is purpose-built.

ChatGPT helps individuals think faster.

Clinials helps teams execute faster.

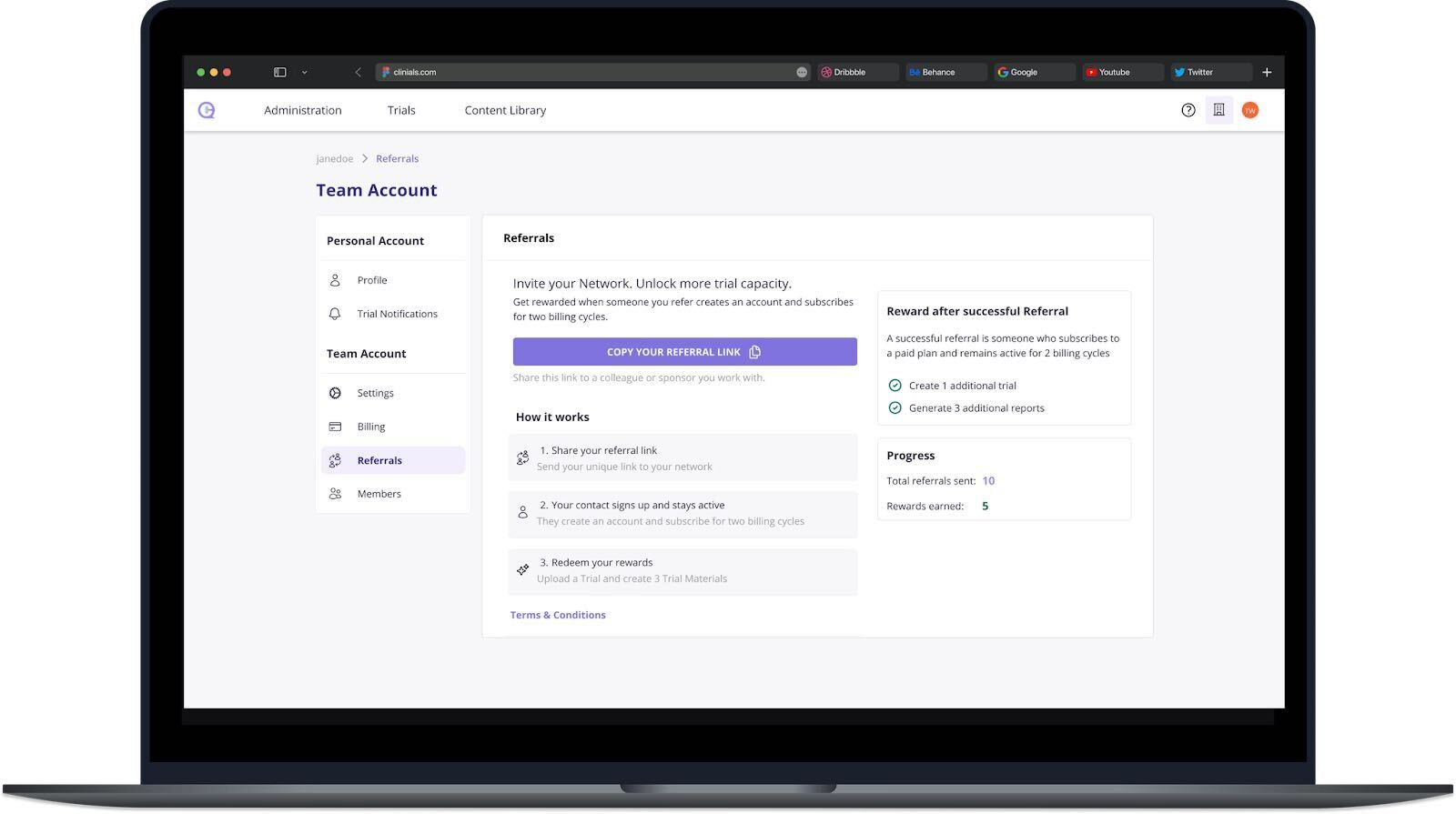

Clinials demonstrates instant ROI, with low-cost monthly subscriptions that remove usage barriers for small sites.

That’s why Clinials isn’t “ChatGPT for clinical trials.”

It’s infrastructure for clarity, so sites, sponsors, and patients can move with confidence. Generate clinical trial content from the protocol in minutes, not days.

AI will keep getting louder this year.

Execution is what will separate signal from noise.