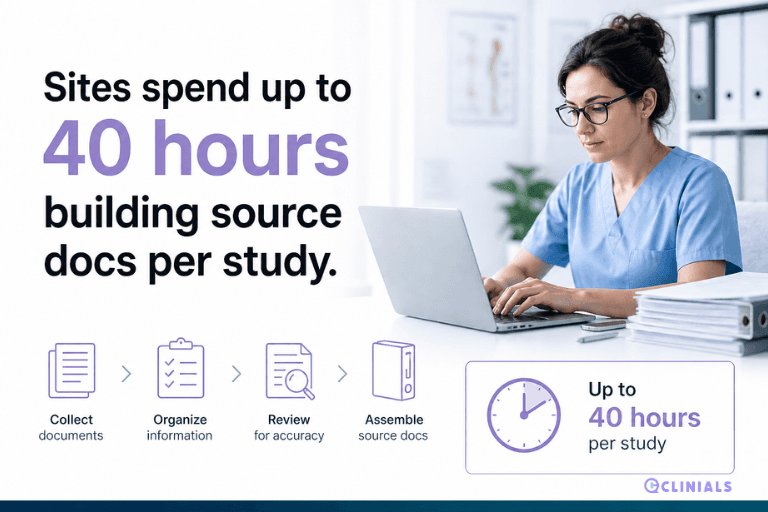

Building Source Documents Takes a Coordinator Up to 40 Hours Per Study. A Tufts CSDD Study Just Confirmed It. Clinials Is Fixing It.

For the first time, a rigorous academic study has quantified what investigative site coordinators have always known: source document preparation is not a minor administrative task. It is one of the most time-consuming, inconsistent, and risk-laden steps in study startup, and it has been entirely unsupported by technology until now.

A collaborative study conducted by the Tufts Center for the Study of Drug Development with CRIO contacts, drawing on a global survey of 209 investigative sites, has identified protocol interpretation and source document preparation as an understudied yet significant bottleneck in study startup timelines. The researchers found that building source worksheets and case report forms can take a coordinator anywhere from 10 to 40 hours per study, with the range driven almost entirely by the coordinator's level of experience and clinical research expertise.

That range is not an anomaly or an outlier. It is the current state of a process that has never been systematically supported. A less experienced coordinator starting on a new study is not facing a productivity deficit. They are facing a task for which no dedicated tool has ever been built, working from a protocol written by a sponsor's clinical team for a regulatory audience, trying to extract and organise information that needs to end up as a structured, visit-by-visit data capture document that will be used in real time with patients.

10 to 40 hrs….

The time it takes a coordinator to build source worksheets and case report forms for a single study, depending on their experience and skill. This is time consumed before the first patient visit, competing with every other startup task in the queue.

Source case report forms, generated automatically from the protocol, are the next capability in the Clinials protocol intelligence platform.

Why the Range of 10 to 40 Hours Matters More Than Either Number Alone

The variation between 10 and 40 hours is the most important data point in this finding, because it reveals something structural about source document preparation that a single average cannot capture.

An experienced coordinator who has worked on similar studies, in similar therapeutic areas, with similar visit schedules, can use pattern recognition and accumulated knowledge to move faster. They know where in the protocol to look, what the CRF will likely require, and how to build worksheets that will hold up under monitoring. Their 10 hours reflects skill, familiarity, and an informal methodology built over years of practice.

A newer coordinator, or an experienced coordinator working in an unfamiliar therapeutic area or protocol structure, faces the same task without those advantages. Forty hours of coordinator time is a full working week. On a study with a tight activation timeline, that week is not available. Something gets compressed, something gets deferred, and source document quality suffers as a result.

The gap between 10 and 40 hours is a tooling problem rather than a training problem. No coordinator, regardless of experience, should be building source documents from scratch when the protocol already contains every piece of information required to generate them.

Clinials was built on exactly that premise. The protocol is not just a regulatory document. It is a structured data source from which every trial-ready document can be derived, provided a system exists to perform the extraction. Source CRFs generated by Clinials compress the range entirely. What takes between 10 and 40 hours manually takes minutes from a protocol upload.

What the Tufts CSDD and CRIO Research Actually Found

The collaborative study identified source document preparation as understudied relative to its actual impact on study startup timelines. This is significant, because the clinical research industry has produced extensive research on site selection, patient recruitment, protocol amendments, and regulatory submission timelines. Source document preparation has largely escaped systematic measurement, not because it is unimportant, but because it has been treated as an internal site task, invisible to sponsors, CROs, and the systems they use to manage studies.

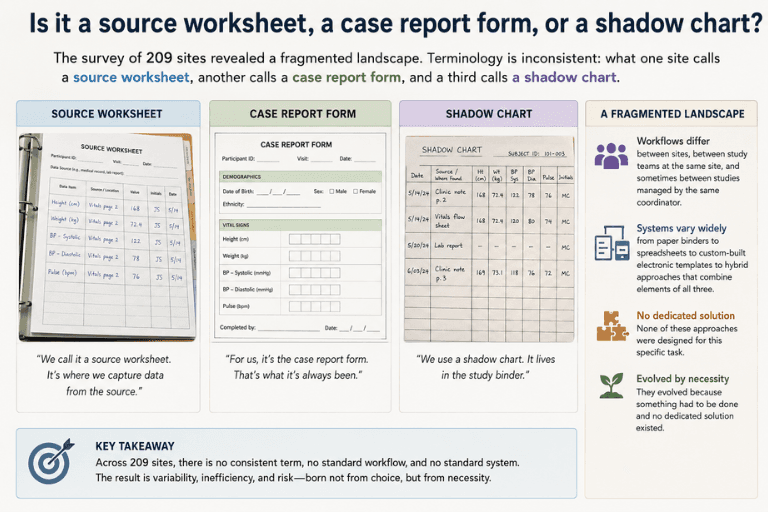

Is it a source worksheet, a case report form, or a shadow chart?

The survey of 209 sites revealed a fragmented landscape. Terminology is inconsistent: what one site calls a source worksheet, another calls a case report form, and a third calls a shadow chart. Workflows differ between sites, between study teams at the same site, and sometimes between studies managed by the same coordinator. Systems range from paper binders to spreadsheets to custom-built electronic templates to hybrid approaches that combine elements of all three. None of these approaches were designed for this specific task. They evolved because something had to be done and no dedicated solution existed.

The researchers identified this as an area holding key opportunities for efficiency gains. That framing understates the commercial and operational case. Source document preparation is not just an efficiency opportunity. It is a risk management imperative, a revenue capture issue for sites billing against incomplete SoA documentation, and a competitive differentiator for networks and CROs that can demonstrate faster, more consistent startup processes to sponsors.

The Quality Risk Hidden in the Time Variation

When source document preparation takes 40 hours instead of 10, the additional time does not always produce proportionally better documents. In many cases, a coordinator spending 40 hours on source documents is a coordinator who is less familiar with the task, less certain of the protocol requirements, and more likely to produce worksheets that miss data fields, misalign with the CRF, or fail to include the procedural context a coordinator needs during a patient visit.

Inadequate case histories are the second most cited deficiency in US FDA inspections of clinical investigator sites. That ranking reflects the downstream consequence of upstream document preparation problems. When source documents are incomplete, inconsistent, or misaligned with the protocol, the data entered into the CRF is unreliable. When CRF data is unreliable, monitoring queries accumulate. When monitoring queries accumulate, site performance degrades. When site performance degrades, sponsors deprioritise that site for future studies.

Source document worksheets that are not designed accurately to align with the protocol and CRF directly impact source data quality. When those worksheets are built manually, by coordinators working from protocols written for a different audience, with no dedicated tooling, the misalignment risk is structural rather than incidental. It happens on every study. It is not a training failure. It is an absence of the right tool for the task.

Why No Tool Has Existed for This Until Now

Source document preparation has always been treated as a site responsibility solved with site resources. Sponsors provide CRFs. Some sponsors provide source document templates, though quality and completeness vary widely.

No one provides a system that reads the protocol and generates the source document the site actually needs to run the study.

The clinical research technology stack was built around data after it is collected. EDC systems capture data. eTMF platforms store documents. CTMS dashboards track milestones. None of these systems generate source documents, because source documents sit upstream of all of them. They must exist before any other system can function, and their existence has always been assumed rather than supported.

This is a category gap, not a technology adoption failure. The category of protocol-to-source-document generation simply has not existed as a defined product space, because source document preparation has been classified as a site workflow rather than a systemic industry problem. The Tufts study is the first large-scale academic effort to classify it as the latter. The data makes the case that it has always been the latter.

What Clinials Source CRF Generation Changes

Clinials generates source case report forms directly from the protocol upload. The output is visit-structured, aligned to the Schedule of Activities, and CRF-compatible. What a coordinator currently spends 10 to 40 hours producing manually is available for review in the same session as the protocol upload.

For a site managing multiple concurrent studies, the compound value of that time saving is material. Ten to 40 hours per study, across a portfolio of five, ten, or twenty active studies, is a significant coordinator capacity variable. Clinials collapses that variable to near zero, freeing coordinator time for the patient-facing work that actually requires human judgment and clinical expertise.

For a site network distributing the same study to multiple sites, Clinials delivers consistent, protocol-accurate source documents to every site from the same upload. The quality variation that currently exists between an experienced coordinator and a newer one is replaced by a consistent, reviewable output that both can work from.

For CROs and sponsors, the source CRF joins a document suite that already generates Schedule of Activities reports, Study Summary for Feasibility documents, eligibility worksheets, Plain Language Summaries, and Informed Consent Forms from a single protocol upload. Sites that receive a complete, ready-to-review document package on the day the protocol arrives are activated faster, perform better under monitoring, and are more likely to be selected for future studies.

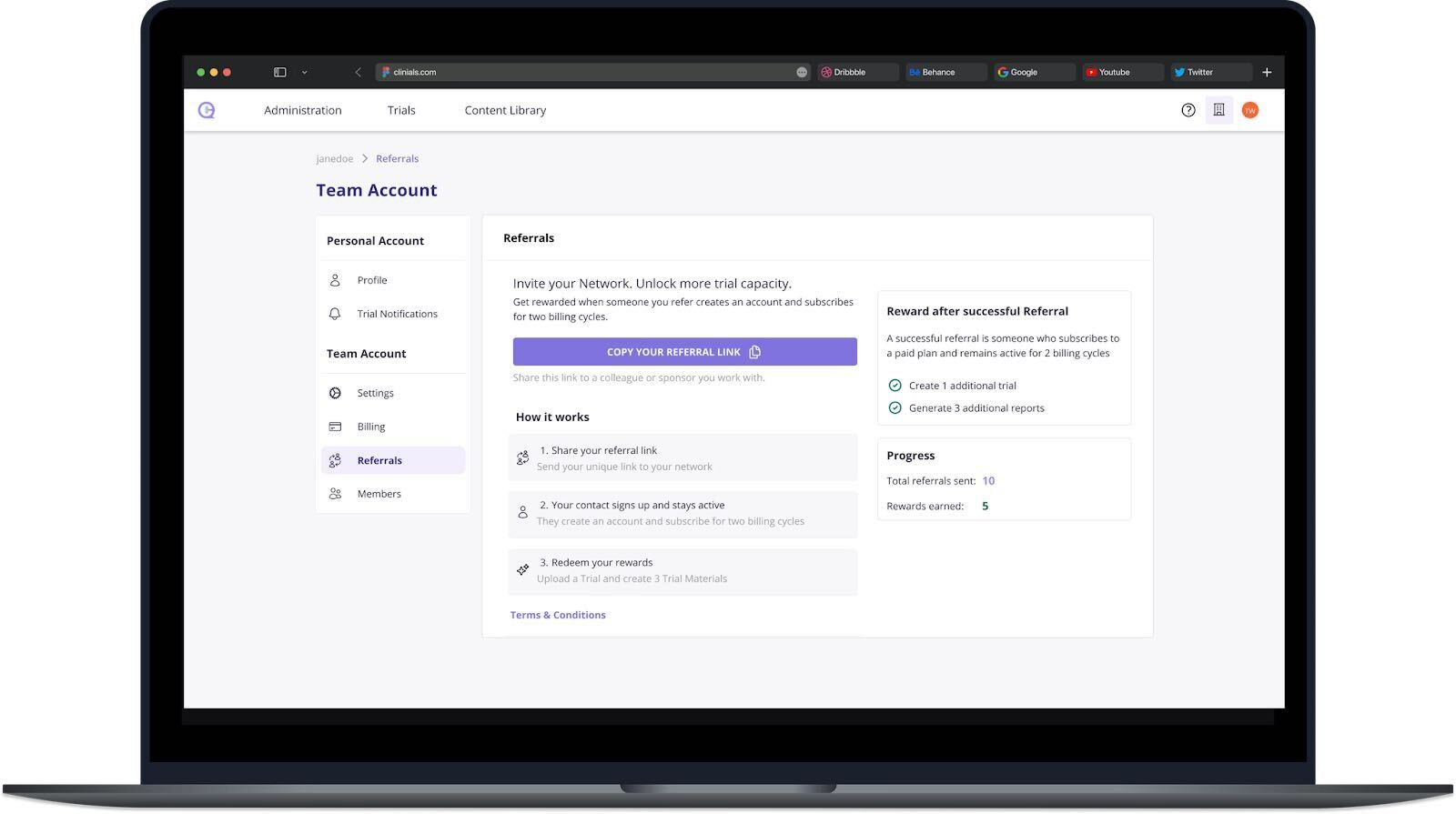

The Waitlist Is Open

Clinials is now accepting sites, site networks, and CROs onto the waitlist for source CRF capability. Access will be extended to a limited first cohort, with priority given to networks and sites currently using other Clinials outputs.

The Tufts study identified source document preparation as holding key opportunities for efficiency gains. Clinials is the efficiency gain. It is browser-based, requires no integration with any existing CTMS, EDC, or eTMF platform, and the first output gives the clearest possible comparison with the current manual process.